As we worked through our last few Interface Lab classes, we learned many new ways to create physical interactions with P5.js. This sparked all sorts of ideas, so I decided to challenge myself to build something new. I started by playing around with different sensors to understand what each configuration could do. In one of the labs I had used a rotary potentiometer to build a device that moved a ball along two axes on screen in P5.js, and I thought it might be cool to build a device that used different types of potentiometer to control a game in P5.js. A game with a twist!

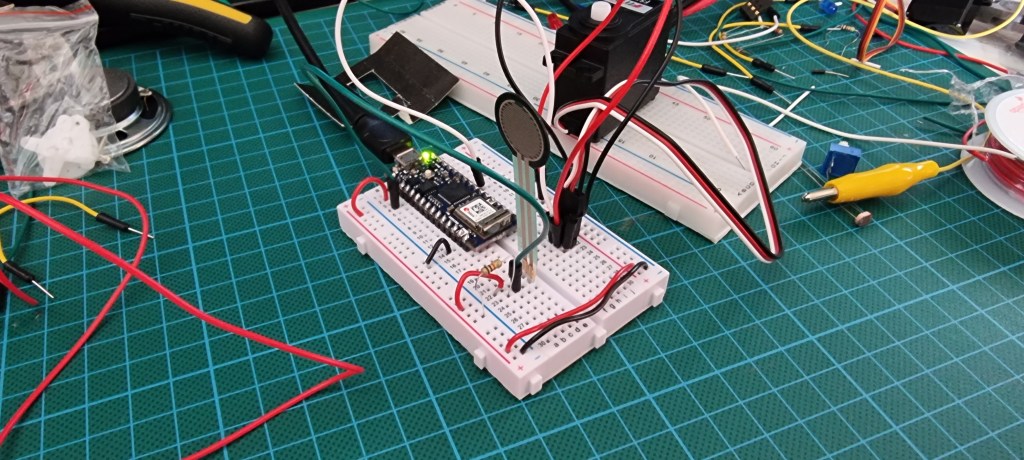

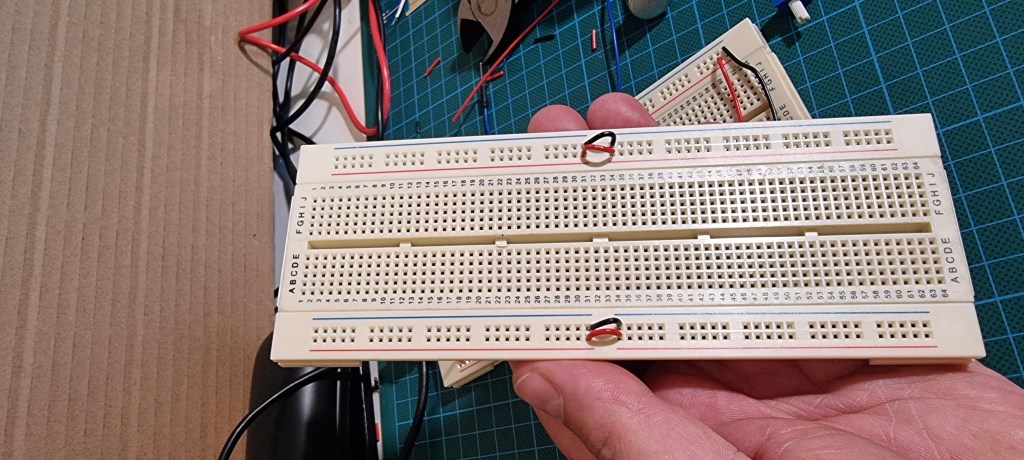

The first thing I did was make sure the input from the Arduino would reach the P5.js sketch through the P5.js serial app. That let me start on a P5 sketch that could be manipulated with the sensors attached to the Arduino. I built the setup we learned from David in class and immediately ran into problems: I had not connected the lower half of the breadboard to the upper half, so nothing on the lower half would work! Luckily I got some help with this and was able to move forward.
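The post doesn't say what format the Arduino used to send its readings. A common pattern, assumed here, is one comma-separated line per loop, such as `512,300,1` for the potentiometer, FSR and button; `Serial.println()` ends each line with `\r\n`. The sketch below shows both ends of that exchange in C++ (the language Arduino sketches are written in). `formatReadings` and `parseReadings` are hypothetical names, and in the real P5 sketch the same splitting would be done in JavaScript.

```cpp
#include <cassert>
#include <sstream>
#include <stdexcept>
#include <string>
#include <vector>

// Arduino side (assumed format): potentiometer, FSR and button readings joined
// by commas. Serial.println() would append "\r\n" to mark the end of the line.
std::string formatReadings(int pot, int fsr, int button) {
    assert(button == 0 || button == 1);  // digitalRead() gives 0 or 1
    return std::to_string(pot) + "," + std::to_string(fsr) + "," +
           std::to_string(button);
}

// P5 side (shown in C++): strip the line ending, split on commas and convert
// each field to an int. Returns false if the line is malformed.
bool parseReadings(const std::string& line, std::vector<int>& out) {
    out.clear();
    std::string trimmed = line;
    while (!trimmed.empty() &&
           (trimmed.back() == '\n' || trimmed.back() == '\r')) {
        trimmed.pop_back();
    }
    std::stringstream ss(trimmed);
    std::string field;
    while (std::getline(ss, field, ',')) {
        try {
            std::size_t used = 0;
            int value = std::stoi(field, &used);
            if (used != field.size()) return false;  // e.g. "12x"
            out.push_back(value);
        } catch (const std::exception&) {  // non-numeric or out of range
            return false;
        }
    }
    return out.size() == 3;  // expect exactly three readings
}
```

Rejecting short or garbled lines matters in practice: if the sketch starts listening partway through a line, the first thing it receives can be a fragment.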

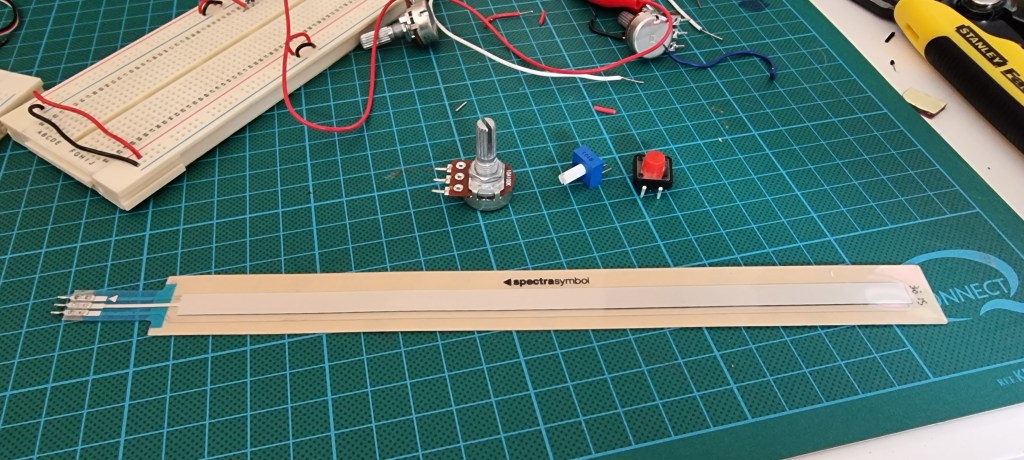

Once this setup was working I could swap sensors in and out to see what would and would not work. Previously I had used an LDR in place of an FSR, which let me explore concept ideas, but swapping the rotary potentiometer out for an LDR or an FSR took a bit more work.

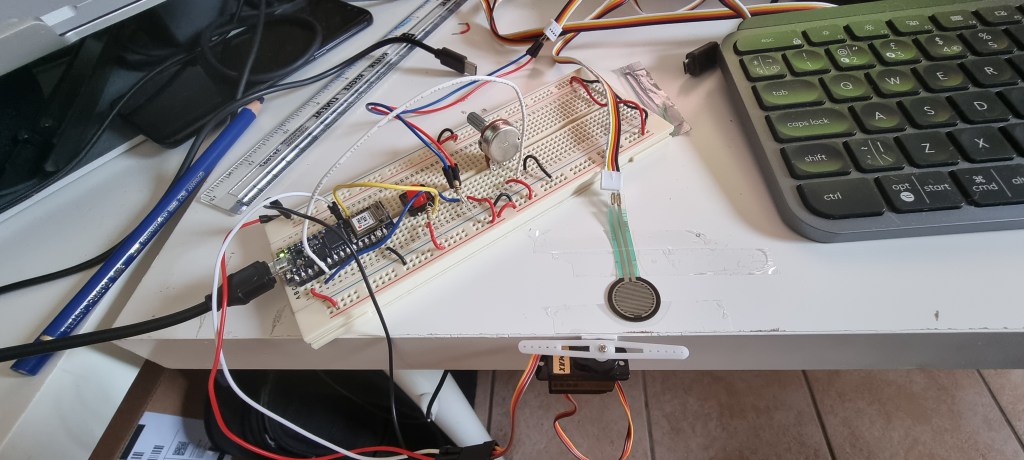

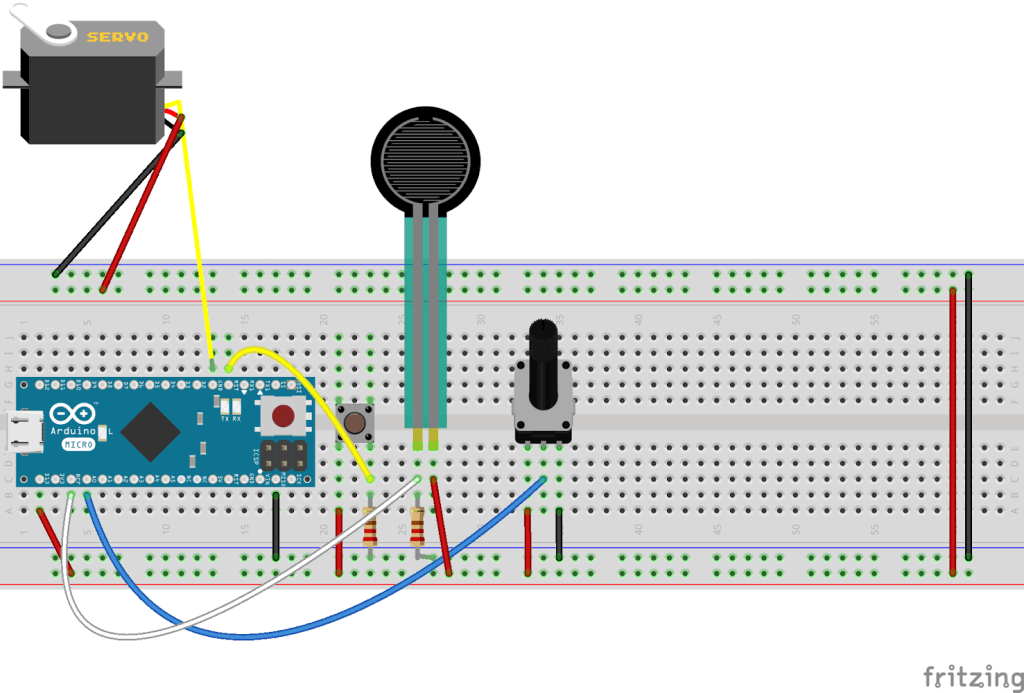

It came down to putting a resistor in line with the sensor and wiring it a little differently. Unlike the three-pin potentiometer, which divides the voltage on its own, the two-legged FSR needs a fixed resistor alongside it to form a voltage divider that the analog pin can read. With a lot of effort I got the FSR connected. At this stage I had three inputs wired up: a rotary potentiometer, an FSR and a button. Next I had to make sure I was getting a reading from all three. By opening the serial port and printing the values in the Arduino IDE, I got a readout that confirmed the sensors were working. I wanted to use the FSR for an idea I had, but I did not realize how much work it would take!
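As a rough illustration of why the FSR needs that extra resistor: with the FSR between 5 V and the analog pin and a fixed resistor from the pin to ground (an assumed wiring; the post doesn't specify it), the pin sees V_out = V_cc × R_fixed / (R_fixed + R_FSR). Pressing harder lowers the FSR's resistance, so the reading rises. The hypothetical helper below estimates what `analogRead()` would report on a 10-bit board such as the Uno, where readings run from 0 to 1023.

```cpp
#include <cassert>
#include <cmath>

// Assumed wiring: FSR from 5 V to the analog pin, fixed resistor from the pin
// to ground. Vout / Vcc = R_fixed / (R_fixed + R_fsr), so Vcc cancels out.
// Returns an estimated 10-bit reading (0-1023) for a given FSR resistance.
int expectedReading(double fsrOhms, double fixedOhms) {
    assert(fsrOhms >= 0.0 && fixedOhms > 0.0);
    double fraction = fixedOhms / (fixedOhms + fsrOhms);
    return static_cast<int>(std::lround(fraction * 1023.0));
}
```

For example, with an assumed 10 kΩ fixed resistor, an FSR pressed down to 10 kΩ reads about half scale (around 512), while an unpressed FSR, whose resistance is very high, reads close to 0.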

Now I had the Arduino set up, the sensors giving readings, the Arduino talking to P5.js, and a basic sketch that responded to the sensor input. What I wanted to create was a game a little like Pong, except in reverse: a paddle bounces up and down the y axis of the screen, and the objective is to get a ball past the paddle into the end zone to win the game. Except you can never win this game! More on this later!

For this loser's game I needed to make sure there was no way for the player to get the ball into the end zone. My plan was to create an enemy that never lets you get the ball there. This was the second part of my Arduino struggle, and it was also the reason I used the FSR as the input for moving the ball along the x axis. Pressing the FSR moves the ball along the x axis toward the end zone; releasing the pressure sends the ball back to the other end of the screen. I needed an interaction that stopped the FSR from being effective, and this is when I turned to my servo motor!
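The on-screen rules can be sketched as a few functions called once per frame. This is written in C++ to match the other examples, but each function maps directly onto code in a p5.js `draw()` loop. The `Game` struct, screen size, paddle speed and end-zone width are all hypothetical; the post doesn't give them.

```cpp
#include <cassert>

// Hypothetical game state; the sizes and speed are placeholder values.
struct Game {
    double width = 640.0;      // screen width in pixels
    double height = 480.0;     // screen height in pixels
    double ballX = 0.0;        // ball position along the x axis
    double paddleY = 0.0;      // paddle position along the y axis
    double paddleSpeed = 4.0;  // pixels per frame; the sign is the direction
};

// The ball follows the FSR directly: no pressure leaves it at the start
// (x = 0) and a reading of 1023 (top of the 10-bit range) puts it at the far
// edge, so releasing the FSR sends the ball back.
void updateBall(Game& g, int fsrReading) {
    assert(fsrReading >= 0 && fsrReading <= 1023);
    g.ballX = (fsrReading / 1023.0) * g.width;
}

// The paddle moves every frame and reverses when it hits the top or bottom.
void updatePaddle(Game& g) {
    g.paddleY += g.paddleSpeed;
    if (g.paddleY < 0.0) {
        g.paddleY = 0.0;
        g.paddleSpeed = -g.paddleSpeed;
    } else if (g.paddleY > g.height) {
        g.paddleY = g.height;
        g.paddleSpeed = -g.paddleSpeed;
    }
}

// The player "wins" once the ball is inside the end zone on the right.
bool reachedEndZone(const Game& g, double zoneWidth) {
    return g.ballX >= g.width - zoneWidth;
}
```

Nothing in this code stops the player from winning; in the design described here, that job is left to the servo on the physical side.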

I hooked the servo up to another pin on the Arduino and connected the FSR as well. Fairly basic, but still a job for me given my limited experience. I got the FSR to activate the servo and then began figuring out how to map one to the other correctly, which took a bit of trial and error. Then it was a matter of transplanting this code into the other Arduino sketch using a method I call the Frankenstein: I cobble together a bunch of code, hope for the best, and, when it inevitably doesn't work, try to solve the problems by process of elimination.
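For mapping FSR readings to servo positions, Arduino's built-in `map()` and `constrain()` are the usual tools. Below, `arduinoMap` reimplements `map()`'s integer formula so it runs outside the Arduino IDE, and `fsrToServoAngle` is a hypothetical helper. The calibration range (`fsrMin`, `fsrMax`) is an assumption, the kind of value found by the trial and error described above.

```cpp
#include <algorithm>
#include <cassert>

// Same integer formula as Arduino's built-in map(), reimplemented so this
// compiles outside the Arduino IDE. Integer division truncates the result.
long arduinoMap(long x, long inMin, long inMax, long outMin, long outMax) {
    assert(inMax != inMin);
    return (x - inMin) * (outMax - outMin) / (inMax - inMin) + outMin;
}

// Hypothetical helper: clamp the FSR reading to a calibrated range (what
// Arduino's constrain() does), then map it onto the servo's 0-180 degrees.
int fsrToServoAngle(int reading, int fsrMin, int fsrMax) {
    int clamped = std::min(std::max(reading, fsrMin), fsrMax);
    return static_cast<int>(arduinoMap(clamped, fsrMin, fsrMax, 0, 180));
}
```

On the board this might look like `myServo.write(fsrToServoAngle(analogRead(A0), 50, 900));`, where `myServo`, the pin and the range are placeholders. Clamping first keeps stray readings outside the calibrated range from driving the servo past its limits.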

I managed to get the Frankenstein monster working, but it would not work with the P5 sketch! After many hours of head scratching I went back to tutorials to work out how to fix it. My big issue was forgetting to type the correct characters; switching back and forth between the Arduino IDE and P5.js kept tripping me up. I found that slowing down to a snail's pace and checking everything several times made things go more smoothly. Slow is smooth and smooth is fast! Well, at least I hope that's how it will go in future.

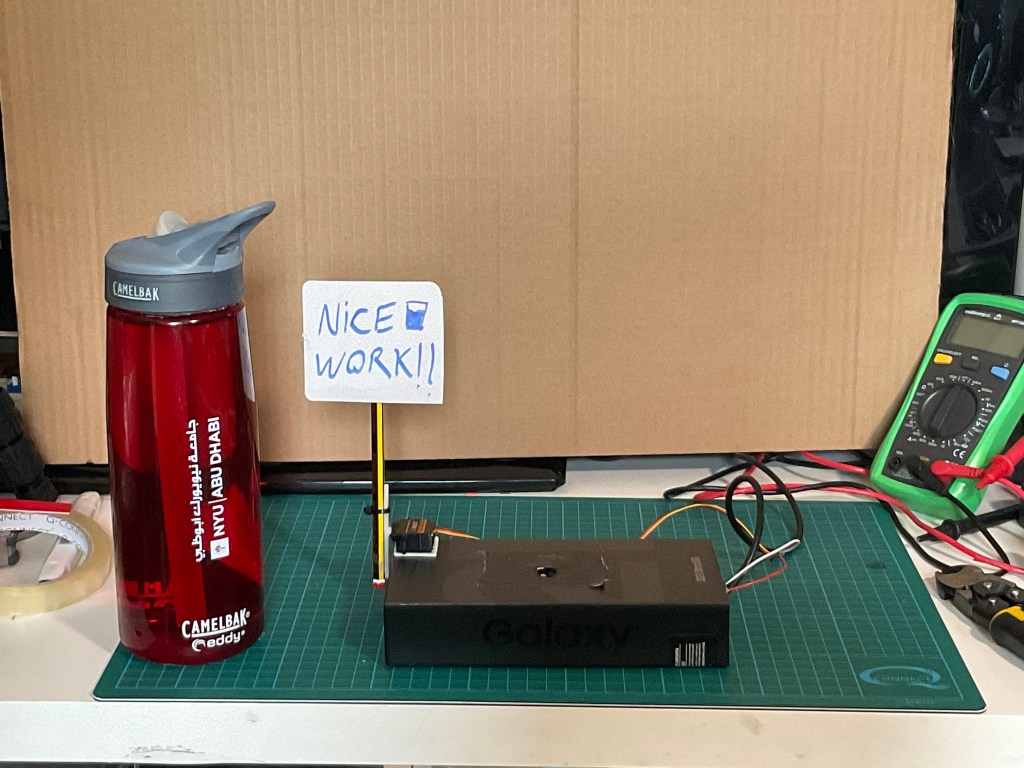

After some disasters and misfires with the code, I got the FSR to activate the servo while simultaneously moving the ball in P5.js. I then made a very basic prototype of the system, which I taped to my desk to test. I had a family member try it and saw the flaws in the physical side of the interaction. The next stage is to get the mapping for the FSR and servo to correspond with the distance the ball moves. Once that is done I can start building the enclosure.
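One way to make the servo and the ball correspond, assumed here rather than taken from the post, is to normalize the FSR reading once to a value `t` between 0 and 1 and derive both outputs from it. A half-press then always means half the distance across the screen and half the servo's travel. `Outputs`, `mapForce` and its parameters are hypothetical.

```cpp
#include <algorithm>
#include <cassert>

// Hypothetical pair of outputs driven by a single FSR reading.
struct Outputs {
    double ballX;    // where the sketch draws the ball
    int servoAngle;  // where the servo is told to point
};

// Normalize the reading once to t in [0, 1], then derive both outputs from
// t so the ball's travel and the servo's travel stay in proportion.
Outputs mapForce(int reading, int fsrMin, int fsrMax,
                 double screenWidth, int maxAngle) {
    assert(fsrMax > fsrMin);
    int clamped = std::min(std::max(reading, fsrMin), fsrMax);
    double t = static_cast<double>(clamped - fsrMin) / (fsrMax - fsrMin);
    // +0.5 rounds to the nearest degree (t is never negative here)
    return Outputs{t * screenWidth, static_cast<int>(t * maxAngle + 0.5)};
}
```

Keeping a single source of truth for "how hard is the player pressing" means recalibrating the FSR range updates the ball and the servo together.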

UPDATE

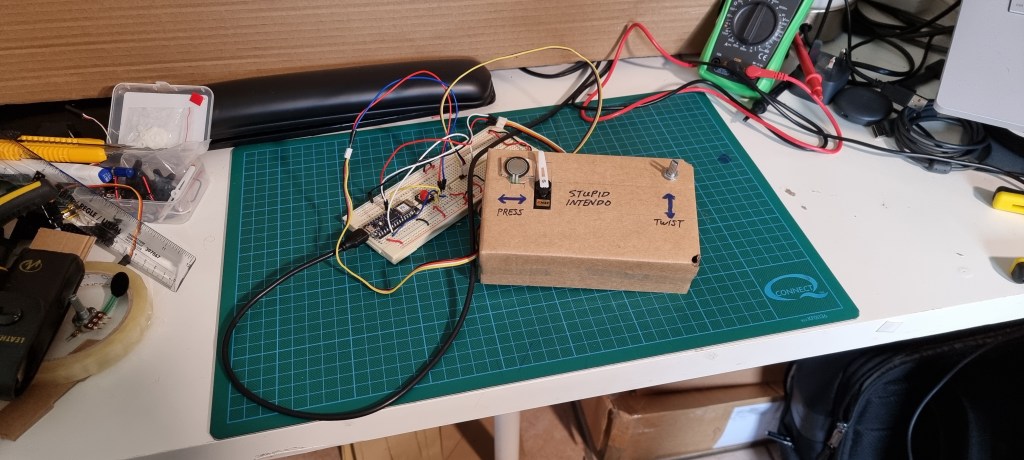

I got the enclosure worked out and mounted the servo, potentiometer and FSR on it, trying to take ergonomics into account.

Once that was done I tested the game again. The cables bunched up inside the enclosure cause a lot of interference, but the game was still as frustrating to play as intended!
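If that interference shows up as jittery readings, one common software mitigation (not something the post describes) is to smooth each sensor value before using it, for example with an exponential moving average. The hypothetical `Smoother` class below reduces jitter at the cost of a slightly slower response; it does not fix the underlying wiring, and whether to apply it on the Arduino or in the sketch is a design choice.

```cpp
#include <cassert>

// Exponential moving average: each new reading moves the smoothed value by a
// fraction alpha of the difference. Smaller alpha = smoother but slower.
class Smoother {
public:
    explicit Smoother(double alpha) : alpha_(alpha) {
        assert(alpha > 0.0 && alpha <= 1.0);
    }

    double update(double reading) {
        if (!primed_) {  // start from the first reading instead of 0
            value_ = reading;
            primed_ = true;
        } else {
            value_ += alpha_ * (reading - value_);
        }
        return value_;
    }

private:
    double alpha_;
    double value_ = 0.0;
    bool primed_ = false;
};
```

With `alpha = 0.5`, readings of 100, 200, 200 come out as 100, 150, 175; with `alpha = 1.0` the raw readings pass straight through.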

Finally, instructions on how to use the controller were the finishing touch.

This has been a really fun project to work on. I really enjoyed using the different skills we learned over the past five weeks and bringing them together into this piece of whimsical art.